In its press release of 8 April 2026 (1), DINUM officially announced that it is leaving Windows and adopting Linux on its workstations. The decision is part of a broader strategy to reduce extra-European digital dependencies, led by the Prime Minister, the Minister for Public Action and Accounts, and the Minister Delegate for Artificial Intelligence and Digital Affairs. The goal is to strengthen French and European digital sovereignty, particularly in light of geopolitical tensions and the end of Windows 10 support in October 2025.

- link no. 1: Press release – Souveraineté numérique : réduction des dépendances extra-européennes

- link no. 2: Les Numériques – La DINUM quitte Windows pour Linux

- link no. 3: 01net – Souveraineté numérique : l’État français veut abandonner Windows pour Linux

- link no. 4: Économie Matin – Souveraineté numérique : la France abandonne Windows pour Linux

- link no. 5: NixOS on Wikipedia

- link no. 6: Clubic - article on NixOS (Securix and Bureautix)

- link no. 7: Direction interministérielle du Numérique

- link no. 8: PDF of the DINUM press release - l'État accélère la réduction de ses dépendances extra-européennes

- link no. 9: Openburo, Le Standard Européen pour l'Orchestration de l'Espace de Travail

- link no. 10: About The Software for Health Foundation

- link no. 11: Open Interop Documentation

Contents

- Outlook

- A citizen's hopes

- OpenBuro and Open-Interop

- Stakeholders

- Scope and timeline

- Stakes and challenges

- GNU/Linux distribution chosen by DINUM

- The OS is NixOS, ANSSI-style, in a project named Sécurix

- Bureautix office software comes in … several flavors

- IT paradise hides in the details

Outlook

Even limited to DINUM for now, this decision to abandon Windows and Microsoft appears to be at a very early stage. The technical solutions are not yet fully defined, finished, mature, or validated.

The dependencies (existing technological ties) between the State's information systems and external or non-FOSS software have not been mapped, still less the migrations, and still less an overall timeline or budget.

David Amiel, Minister for Public Action and Accounts: "[…] The transition is under way: our ministries, our operators, and our industrial partners are today committing to an unprecedented effort to map our dependencies […]". (1)

That may be why, in a proactive spirit or one inspired by Agile methods, DINUM is serving as a test bench, a prototype.

Moreover, there is no single European alternative to Microsoft's office suite and its application ecosystem. The candidates are at varying stages of progress and maturity, and are sometimes in legal conflict, as in the OnlyOffice versus Euro-Office episode reported on this site. On that subject, the OpenBuro project appeared in 2026 (see below).

DINUM will coordinate an interministerial plan to reduce extra-European dependencies. Each ministry (operators included) will be required to formalize its own plan by the autumn, covering the following areas: workstations, collaborative tools, antivirus, artificial intelligence, databases, virtualization, and network equipment. These action plans will give the digital industry visibility into the State's needs; that industry has major strengths that public procurement should bring to the fore. (1)

In short, this is not the effective start of a global migration, but perhaps the beginning of the continuation of the start of the preliminaries (for example, in 2022: Le poste de travail Linux : un objectif gouvernemental ?), with a declaration of intent, a first objective of migrating DINUM's workstations, and the beginnings of an organization getting under way. With budgets under pressure, will sovereign FOSS be an asset, or a casualty of the next presidential campaign, which could bury the subject?

The press release explicitly says that plans to reduce extra-European dependencies remain to be drawn up by the autumn (of 2026, I hope ;-) ). (1)

That wording is, to say the least, imprecise, with few dates and few budget figures.

A citizen's hopes

We will see how things actually unfold. For me, the people who led and decided the Gendarmerie's migration nearly 20 years ago (see for example the LinuxFr article Le poste de travail du gendarme sous GNU/Linux Ubuntu) will remain my heroic references for open source in a professional State setting, more so than the apparently aborted 2007 episode of members of parliament on Linux.

I will follow this with a good deal of hope. The citizen in me wishes this project every success in its overall objectives: among others, more independence in our political course, greater freedom of information, better IT security, perhaps recurring savings in public spending on licenses and services, and finally a wider spread of FOSS throughout France. On that note, I would like the French State to fund FOSS substantially, at least for the software that ANSSI recommends in its Socle Interministériel des logiciels libres.

OpenBuro and Open-Interop

The DINUM press release recalls the 2026 launch of OpenBuro and Open-Interop. OpenBuro aims to be the open European standard that competes with Microsoft 365 and Google Workspace by orchestrating applications. It was launched at FOSDEM 2026 in Brussels by DINUM and LINAGORA (Twake Workplace). It is meant to be an open standard that unifies applications, open source or not, into a genuine platform: an alternative to Microsoft 365 with no lock-in and no abrupt replacement of existing tools.

Open-Interop is an open source interoperability component from the Software for Health Foundation, a non-profit organization that promotes open source software for health, with a particular focus on low- and middle-income countries and on building local skills. The foundation also presents itself as a body that wants to make digital health solutions sustainable, affordable, and independent of third-party vendors.

Stakeholders

The Direction interministérielle du Numérique (DINUM) is a directorate of the French public administration. A service of the Prime Minister, it is placed under the authority of the Minister for Transformation and the Civil Service. Its mission is to draw up the State's digital strategy and steer its implementation. It is regarded as the State's information systems directorate.

The Direction des achats de l’État (DAE) is a directorate of the economic and financial ministries. It defines and implements the State's purchasing policy, except for defense and security purchases. Its other missions include managing interministerial contracts, advising ministries, and professionalizing buyers.

The Direction générale des Entreprises (DGE) is a directorate of the French public administration attached to the Ministry of the Economy and Finance. It designs and implements the public policies that support business development.

The Agence nationale de la sécurité des systèmes d’information (ANSSI) is a service attached to the Secretariat-General for Defense and National Security, the authority that assists the Prime Minister in exercising his responsibilities for defense and national security. ANSSI is responsible for the security of national information systems and is the national authority for cybersecurity and cyberdefense in France. Its missions are to defend, know, share, support, and regulate.

Unfortunately, no one directly represents citizens in this process.

Scope and timeline

DINUM, which has about 250 workstations, will be the first to migrate to Linux. Each ministry, along with its operators, must formalize a plan to reduce extra-European dependencies by autumn 2026. The migration also covers collaborative tools, with the rollout of the Suite Numérique (Tchap, Visio, sovereign email, file storage, and so on), already tested by 40,000 staff.

Stakes and challenges

This migration is presented as a project of unprecedented scale, with significant technical and organizational obstacles. DINUM will coordinate an interministerial plan, in collaboration with ANSSI, the DGE, and the DAE, to identify dependencies and define sovereign solutions. Digital industry meetings are planned for June 2026 to put public-private alliances around European sovereignty into practice.

GNU/Linux distribution chosen by DINUM

DINUM chose the NixOS distribution for its 250 workstations because it can be deployed from scripts, so that every workstation is identical. As a nod to the world of Astérix le Gaulois, the components are named Sécurix and Bureautix.

The OS is NixOS, ANSSI-style, in a project named Sécurix

The operating system for DINUM, Securix on GitHub, is therefore a NixOS modified to replace conventional password authentication with FIDO2 hardware keys, following the recommendations on secure administration of information systems from ANSSI, the Agence nationale de la sécurité des systèmes d’information.

Bureautix office software comes in … several flavors

The demonstration examples of the Bureautix configuration on GitHub list the preinstalled software, which includes three office suites: LibreOffice, OnlyOffice, and WPS Office. This is probably meant to offer several ways of opening documents from the Microsoft Office 365 suite, which is always a delicate and sometimes disappointing operation.

IT paradise hides in the details

Let us hope that one day, if Securix and Bureautix are adopted and rolled out widely, and if GitHub is indeed where they are developed, someone will consider moving them off GitHub's servers, which sit under the thumb of Microsoft's great Satya, to a sovereign forge like those in the Netherlands or Germany.


Only four weeks remain before the annual conference of the OW2 open source community, on 2 and 3 June 2026 in Paris-Châtillon!

For this edition, the association is focusing on European digital sovereignty. At a time when the European Union is strengthening its autonomy in technologies, resources, and secure digital services, open source and open models stand out as essential levers of technological independence. Through some thirty high-level talks, OW2con will explore the strategic role of these approaches in building a sovereign digital ecosystem.


Highlights of the conference include:

- 5 internationally renowned keynote speakers: Valerie Aurora, co-founder of the Amsterdam internet resiliency club; Emiel Brok, Sovereignty Ambassador, SUSE / DOSBA; Martin Häuer, Board, Open Source Imaging Initiative; Matthias Kirschner, President, FSFE; Jean-Louis Le Roux, Senior Vice President, Orange Intl. Networks Infrastructures and Services (OINIS)

- 1 guest country: Germany

- 4 parallel "Breakout Sessions" on the following themes: open source governance with the OSPO Alliance; the Cyber Resilience Act (CRA) with CNLL and inno³; the Zapp Accelerator Meetup with the NGI community; open source in education, science, and research with the Apereo Foundation.

- A closing debate on the theme "From digital sovereignty to technical independence", moderated by Emiel Brok.

The entire conference is held in English. The agenda includes several opportunities for discussion and networking: during the breaks, at the "OW2 best project awards" ceremony, and at a cocktail reception at the end of the first day.

Thanks to the support of sponsors, access to the conference is free, but registration is required. If you have to cancel, please let us know.


Every year since 2011, Code Lutin has provided financial support to initiatives that promote the values of free software. The program was long known as "Mécénat Code Lutin"; we have since decided to join the Copie Publique initiative so that we can change the world together.

Beneficiaries from previous years include Panoramax, Lemmy, HackInScience, PeerTube, YunoHost, Interhop… and so many others! The complete list is available on the Copie Publique website.


How does it work at Code Lutin?

This year again, we have decided to open applications to the public. If you have a project or an organization whose purpose matches one of the themes listed below, do not hesitate to apply.

- developing free or open source software or a library, or contributing interface design, translation, documentation, and the like;

- an initiative supporting the development and adoption of open standards;

- an initiative fighting software patents or mass surveillance;

- an initiative working toward a neutral, independent, and decentralized Internet;

- an initiative offering alternatives to the GAFAM;

- production of multimedia content distributed under free licenses and contributed to the commons;

- and, more generally, any initiative promoting free production and free culture.

True to our values, we operate democratically: beneficiaries are chosen by a vote on the principle "1 person = 1 vote", in which all employees can take part.

Tell me more!

Applying is quick and easy. Just fill in the form available at the following link: https://framaforms.org/appel-a-projets-copie-publique-2026-de-code-lutin-1772533371

You have until midnight on 17 May to apply! Hesitate no longer.


This Internet press review is part of the monitoring work carried out by April as part of its action to defend and promote free software. The positions expressed in the articles are those of their authors and do not necessarily match those of April.

- [l'Humanité.fr] Et si les USA nous débranchaient: «Il faut repenser notre rapport à la technologie», analyse la présidente de l'April (€)

- [The Conversation] «Dégoogliser» l'éducation? Le modèle alternatif de PeerTube

- [ZDNET] Requiem pour 300 millions d'ordis: obsèques contre l'obsolescence programmée chez Microsoft


[l'Humanité.fr] Et si les USA nous débranchaient: «Il faut repenser notre rapport à la technologie», analyse la présidente de l'April (€)

✍ Pierric Marissal, Thursday 30 April 2026.

To escape our dependence on US digital technology, replacing a Google with a European equivalent is doomed to fail. We need to think about other, non-predatory models, like free software, explains Magali Garnero, president of April (Association pour la promotion du logiciel libre) and a member of Framasoft.

See also:

[The Conversation] «Dégoogliser» l'éducation? Le modèle alternatif de PeerTube

✍ Laurent Tessier, Monday 27 April 2026.

While GAFAM tools are very present on the computers of pupils and their teachers, free solutions are being developed within the French national education system. A few examples.

[ZDNET] Requiem pour 300 millions d'ordis: obsèques contre l'obsolescence programmée chez Microsoft

✍ Thierry Noisette, Monday 27 April 2026.

Seven associations, including April and Que Choisir Ensemble, demonstrated by holding a symbolic funeral in front of Microsoft France's headquarters. They denounce the waste forced by the move to Windows 11, an OS with which many computers are not compatible.

See also:

- [Les e-novateurs] Des centaines de millions d'ordinateurs symboliquement enterrés devant Microsoft France

- [la Croix] Microsoft: les associations se mobilisent face au gâchis de l'arrêt de Windows 10 (€)


As you probably know, this year marks the 35th anniversary of GNUstep, which is both a software framework for developing, in Objective-C, applications that are portable across Windows, macOS, and GNU/Linux, and a runtime environment for those same applications.

Several GNUstep-compatible desktop projects have existed for some years. After the defunct Simply-GNUstep and Étoilé, the active ones include GSDE, developed by Ondrej Florian, and the more ambitious NEXTSPACE by Sergii Stoïan, which aims to faithfully reproduce the ergonomics of OPENSTEP on BSD or GNU/Linux. More recently, in a style closer to macOS, there are also the promising Gershwin (for Xorg) and Ambrosia (for Wayland) desktops developed by James Carthew.

The Agnostep desktop is releasing its BETA 2.0.0 version, in a more classic style with vertical NeXT-like menus, combining Window Maker and GWorkspace with the classic GNUstep runtime.


It nonetheless offers a modern theme inspired by the Papirus project's icon set. Although based on a Debian Lite distribution, it does not ship packages but uses an installation approach similar to BSD ports. A wizard helps with both the initial installation and the addition of further applications, so as to provide the most recent versions of the GNUstep community's applications, compiled from source. Unlike other projects, which sometimes diverge so far from the original sources that their changes can no longer be contributed back upstream, Agnostep's philosophy is to work patiently with the developer community so that the problems found and the improvements made benefit everyone.

In addition, having resolved certain problems of the previous version, it is more stable. Besides the well-known applications of the GNUstep ecosystem, such as GNUMail, SimpleAgenda, and so on, it also offers a new collection of original GNUstep applications created to provide a more consistent user experience:

- Meteo.app: a docked application that also displays the current date. Based on Igor Chubin's wttr.in API.

- UpMem.app: displays uptime and memory usage.

- Updater.app: a docked application with a notification badge that alerts you when Debian packages can be updated. It also lets you carry out the update from the displayed list of packages.

- Birthday.app: a docked application with a badge that informs you of family events. A must-have for the grandfather of many grandchildren.

- OpenDisk.app: opens the media folders where removable disks are mounted. A companion to wmudmount and udisks2 that avoids having to display the GWorkspace desktop.

- Launcher.app: a quick way to display the applications folder in a new window.

- ScreenLock.app: a simple screen locker based on xtrlock.

- Pass.app: a GNUstep interface to the Unix Password Manager program, giving access to the local password store.

- Mixer.app: a simplified, ALSA-compatible version of the mixer derived from VolumeControl.

- AgnostepManager.app: a wizard for compiling and installing additional applications: everyday applications, utilities, games, and development tools.

- Dico.app: a service and a search tool for a French dictionary based on the University of Chicago's DVLF.

- SaveLink.app: an Internet shortcut manager. See the Favorites folder.

Starting with this version, help manuals (in .help format) will be supplied with each relevant application, thanks to recent improvements to the HelpViewer application. This is another example of the fruitful exchanges with the community.

Agnostep is initially developed on a Raspberry Pi 500, but its code allows it to be installed on any computer able to run the Debian GNU/Linux distribution, hence its name: Agnostep is a portmanteau of agnostic and GNUstep.


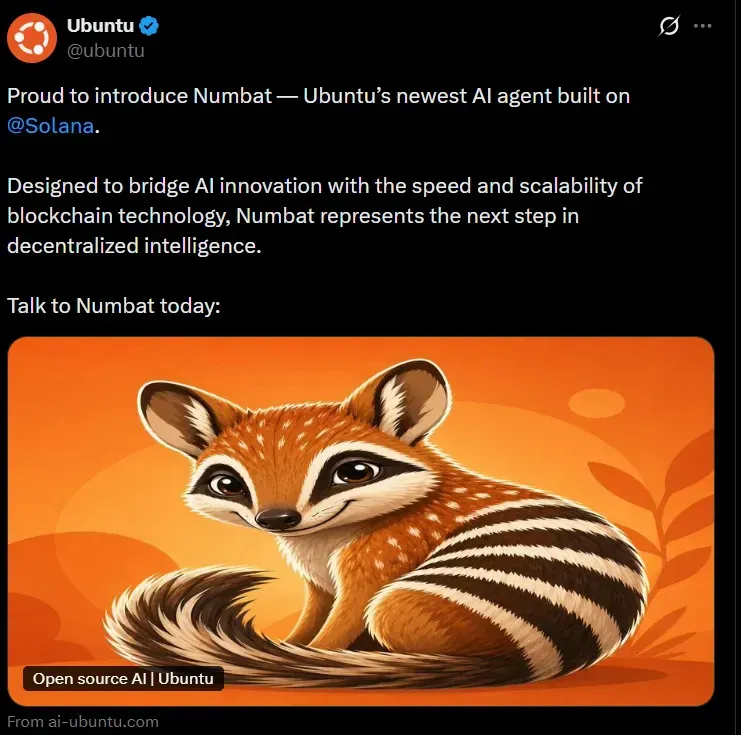

It seems like Ubuntu cannot catch a break.

Their entire web infrastructure was under a sustained DDoS attack for five days. That seems to be over now, but the misery is not.

A few hours ago, there was a (now deleted) tweet from Ubuntu's official Twitter account. It announced the availability of Ubuntu's newest AI agent.

At first glance, it looked legit until you dug deeper.

Ubuntu's official Twitter account was compromised

The tweet looks legit, right? At least it plays with the human psyche.

It talks about AI, which relates to Ubuntu's recent AI move. This could trick many people who might believe that this is a legit next step in the AI direction.

It was mentioned to be built on Solana and the account was also tagged. Solana is a legit open-source blockchain platform for digital transactions and decentralized applications (read crypto payments).

This is why the next line mentions buzzwords like Blockchain and decentralized. Blockchain also relates to crypto so this was more like a build up for crypto that would come later.

The so-called agent is named Numbat, and the main image shows a numbat rendered mostly in orange. "Numbat" is also part of Noble Numbat, the codename of Ubuntu 24.04.

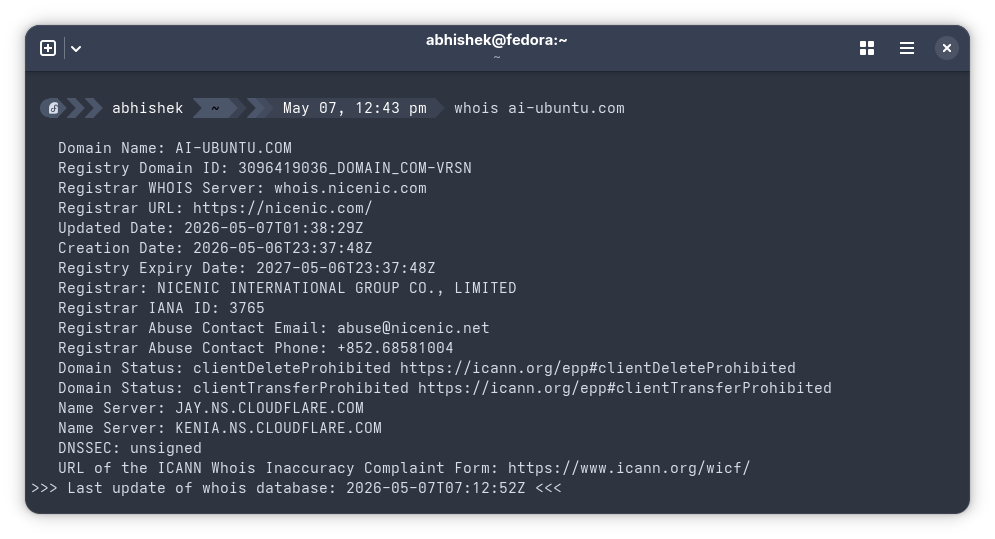

And then there is the displayed URL, ai-ubuntu.com, which resembles ai.ubuntu.com. No ai subdomain exists on ubuntu.com, but the resemblance is enough to trick unsuspecting people.

Mind that it was not a single tweet; it was a thread (a series of nested tweets) and the replies were closed. So even if someone discovered the scam, they wouldn't have been able to alert others in the replies.

So fake AI branding, Ubuntu's Numbat name, Solana tags, blockchain buzzwords, and a near-identical URL all worked together to quietly build false trust, guiding unsuspecting users step by step into a crypto scam before they realized the deception.
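To make the URL trick concrete, here is a minimal Python sketch of the check a careful reader performs by eye: a host belongs to ubuntu.com only if it is exactly that domain or ends with a dot followed by it. The helper name `is_subdomain_of` is hypothetical and used only for illustration; it is not something Canonical or Twitter provides.

```python
from urllib.parse import urlsplit


def is_subdomain_of(url: str, trusted_domain: str) -> bool:
    """Return True only if the URL's host is trusted_domain or a subdomain of it."""
    # urlsplit only fills in hostname when the URL carries a scheme such as https://
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    trusted = trusted_domain.lower().rstrip(".")
    # A real subdomain is separated from the parent domain by a dot, not a hyphen.
    return host == trusted or host.endswith("." + trusted)


print(is_subdomain_of("https://ai-ubuntu.com/", "ubuntu.com"))  # False: a separate domain
print(is_subdomain_of("https://ai.ubuntu.com/", "ubuntu.com"))  # True: shaped like a subdomain
```

The hyphenated name is an entirely separate registration, which is why anyone could buy it. Note that the second result only says the name has the shape of a subdomain of ubuntu.com, not that such a host exists.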

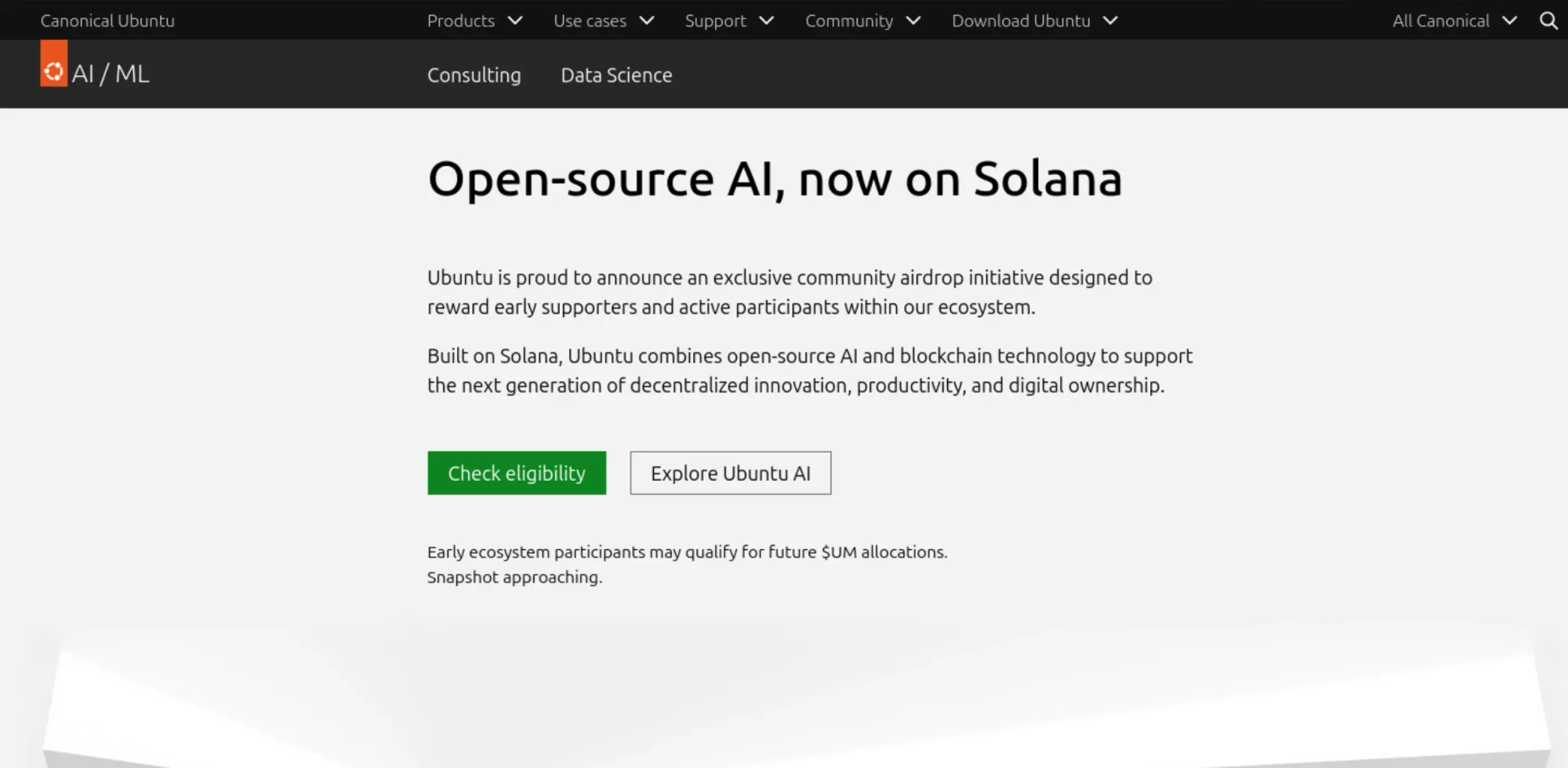

The next step of deception came when the link was clicked.

The crypto trap

As with most briefly compromised accounts, this tweet tried to lure people into a crypto scam. That was not immediately evident unless you clicked on the given URL. And boy, the page behind that URL looks like a typical Canonical webpage.

It would be easy to get fooled by the clever webpage if you were not paying attention. Your guard would have been down because you had clicked a link shared by the official Ubuntu account.

The rest of the page had links to the actual Ubuntu project, making it look even more legit.

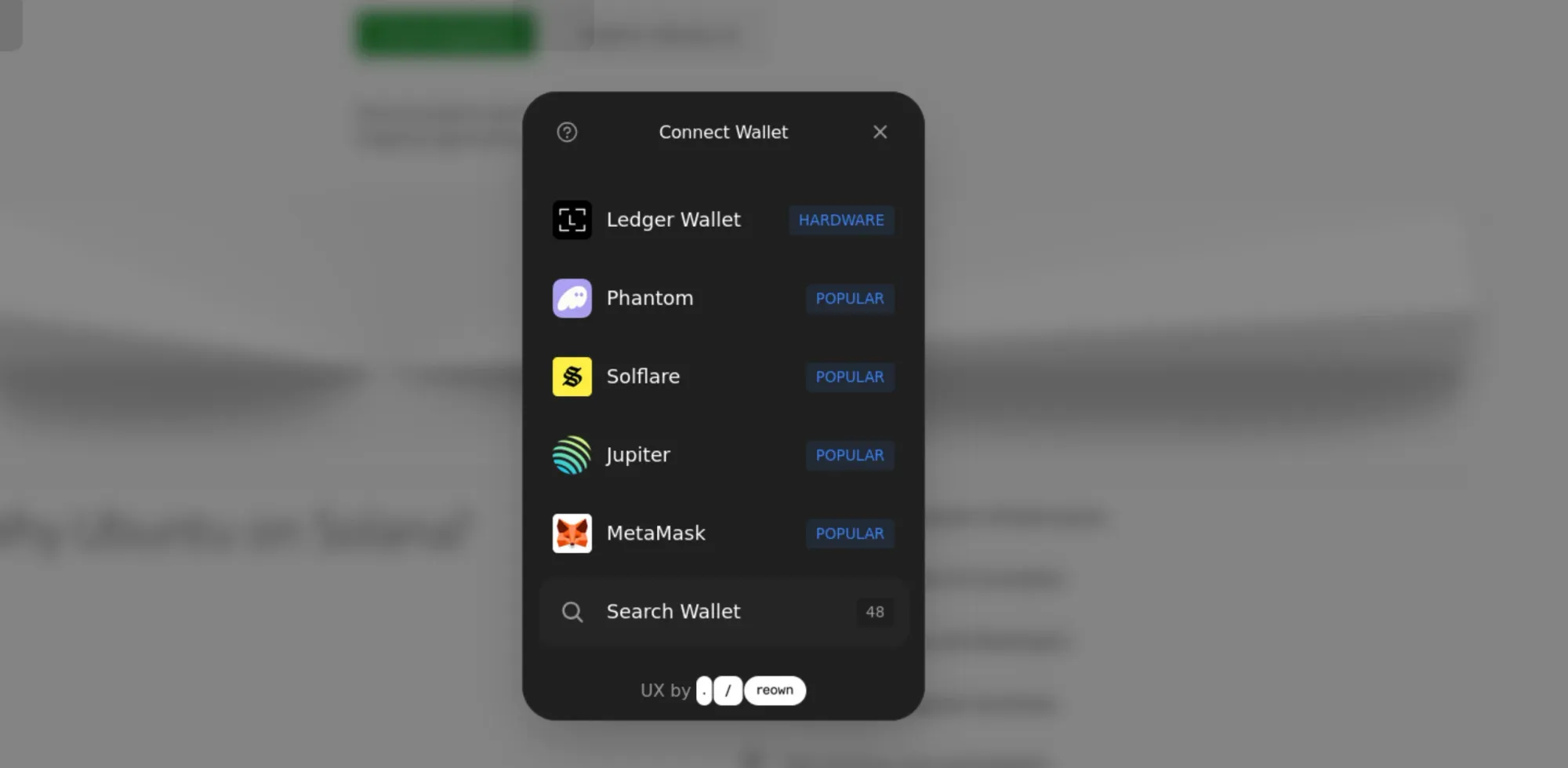

Only when you clicked the "Check eligibility" or "Explore Ubuntu AI" buttons did the deception become evident: the page asked you to connect your crypto wallet.

Why would you do that? Because just before the buttons, there is a text that says:

Early ecosystem participants may qualify for future $UM allocations. Snapshot approaching.

This compromised tweet adds to the pile of misery Canonical has been suffering of late, and it didn't happen in isolation.

The DDoS attack that crumbled Canonical's web assets

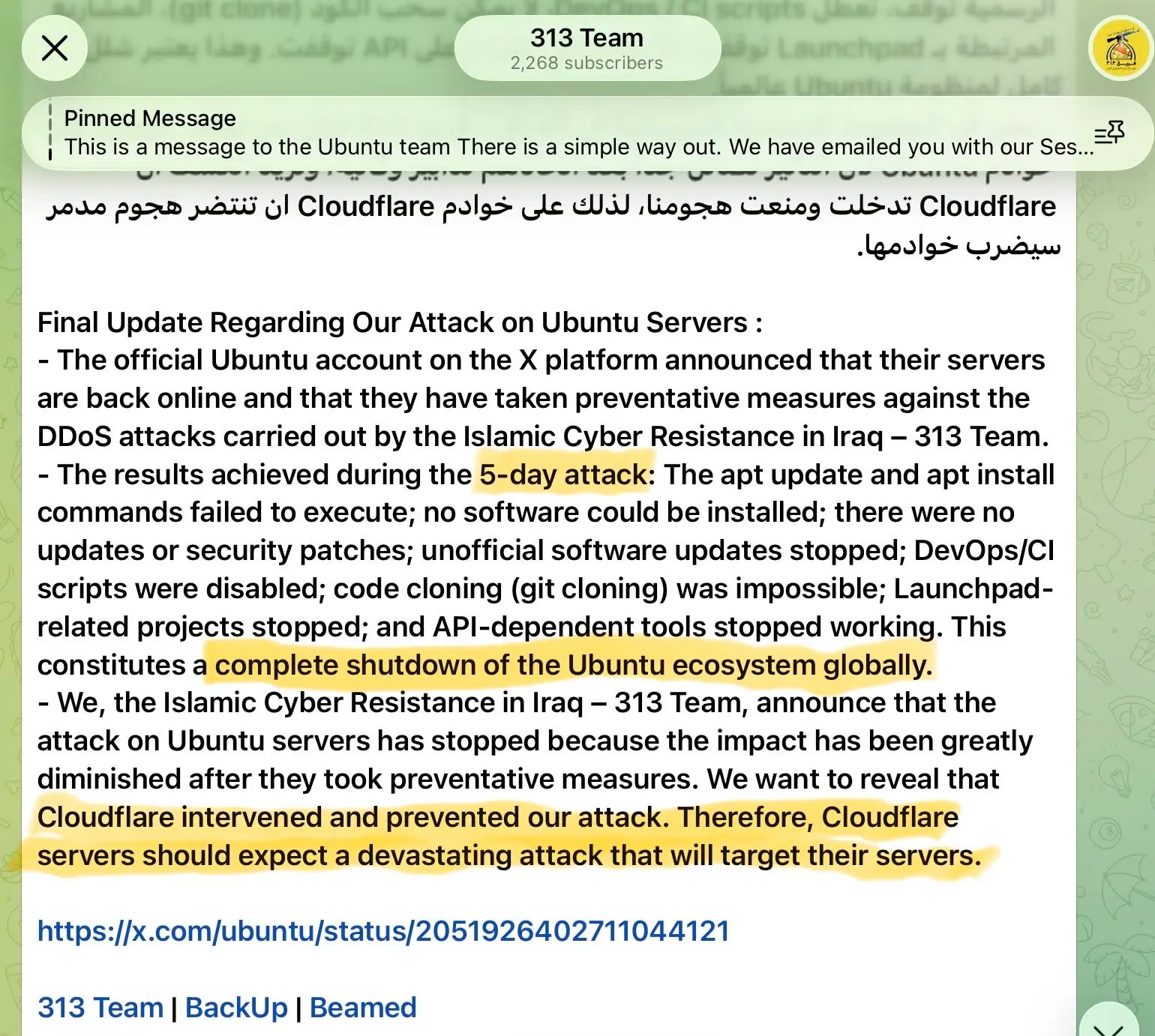

In case you didn't know, Ubuntu was suffering from a large scale DDoS attack. Ubuntu's websites went down for about five days last week but they seem to be back now.

Starting April 30, Canonical's web services faced what the company described as a "sustained, cross-border" attack. The ubuntu.com website, Snap store, Launchpad, and several other Canonical-owned services went offline or became unreliable.

The attack lasted until around May 5, when services were gradually restored. At the time of writing this, Canonical's official status page shows everything fully operational. Let's hope it stays that way.

Note that DDoS attacks make a website unavailable by flooding the server with traffic; they don't compromise the servers. So your Ubuntu installation, package updates (APT repositories are mirrored across the world and kept working), ISO downloads, and the Ubuntu operating system itself were not impacted. Your system was never at risk. However, if you had trouble running snap install commands or pulling from a PPA last week, you now know why.

Canonical has not released a detailed post-incident report yet. A Pro-Iran hacker group called 313 reportedly claimed responsibility, but this has not been confirmed by Canonical.

Are both incidents connected?

The hacker group 313 has announced that it has ended the DDoS attacks. It has not mentioned anything about the compromised tweet.

Now, ai-ubuntu.com was registered with a Hong Kong based registrar, but that doesn't mean the attackers were based in Hong Kong.

One thing to note here is that many organizations, as well as individuals, use third-party tools to manage and schedule their tweets. It is possible that the compromise came through such a third-party Twitter tool. It could also be a human slip-up, and the social media manager's account might have been compromised.

It is really up to Canonical to investigate and find out the root cause. We can only make guesses.

The Google Home Mini launched in 2017 as Google's smallest, cheapest smart speaker. Millions were sold, handed out, and given away as promotional gifts.

Many of them still work, but the device is in the last phase of its lifecycle: it still handles basic tasks, yet it offers no customizability and no local processing capabilities.

The hardware was fine for the time but has become less relevant in Google's lineup over time, with the Nest Mini, its successor, also discontinued. And more recently, there's been talk of new Gemini-powered smart speakers.

But what if you could bring your Home Mini (1st Generation) device up to 2026 standards with local processing by paying only $85?

Tired of AI fluff and misinformation in your Google feed? Get real, trusted Linux content. Add It’s FOSS as your preferred source and see our reliable Linux and open-source stories highlighted in your Discover feed and search results.

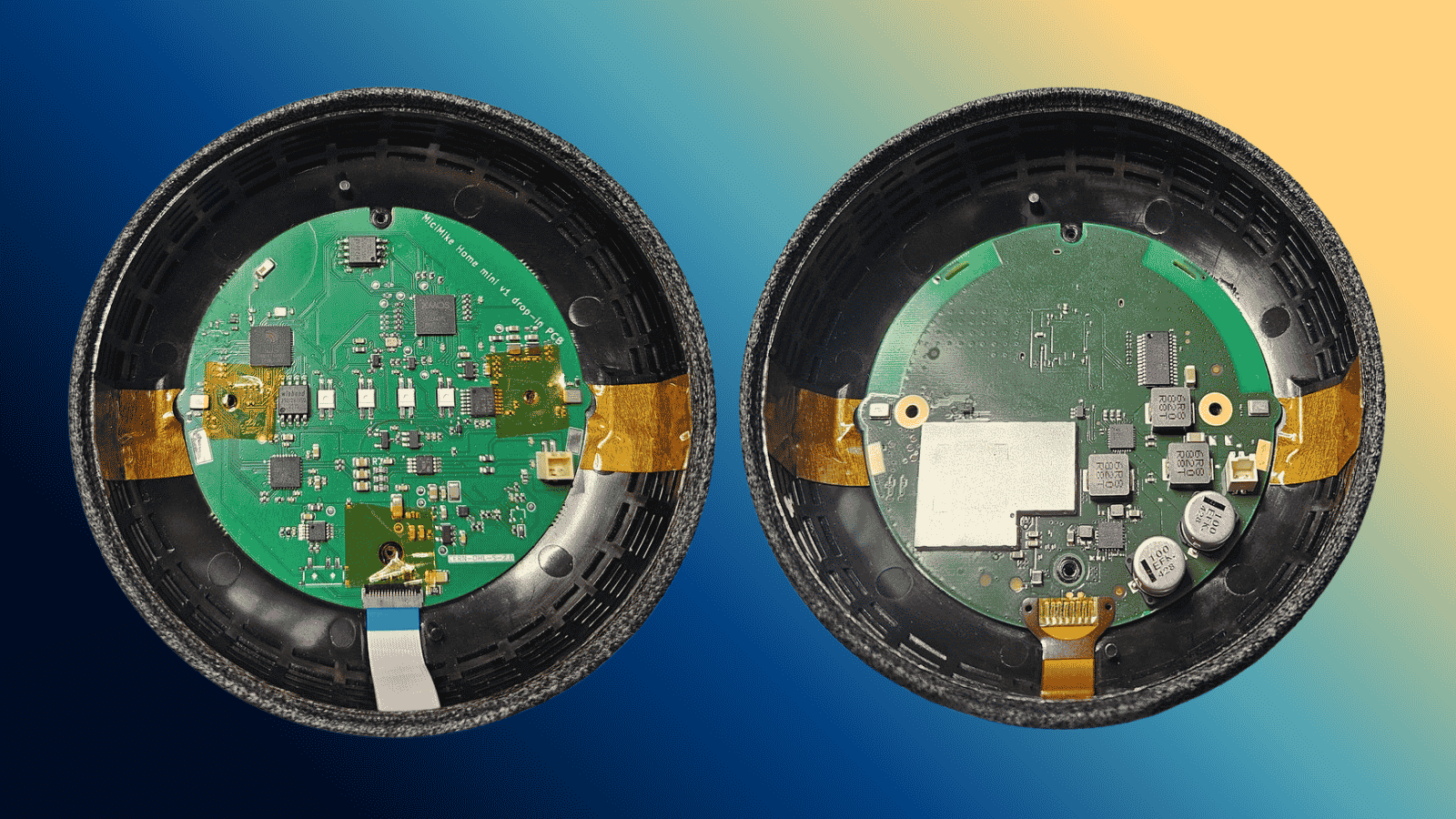

Add us as preferred source on Google📝 MiciMike Home Mini PCB: Key Specifications

Two chips do the heavy lifting on this board. You get an Espressif ESP32-S3 as the main processor, paired with an XMOS XU316 chip dedicated entirely to audio. The Espressif unit brings 8 MB of PSRAM and 16 MB of flash to the table, while the XMOS one carries 4 MB of its own.

The ESP32-S3 covers Wi-Fi, Bluetooth, and wake word detection via microWakeWord, with none of the voice data leaving your device. Audio cleanup falls to the XU316, which takes the signal from the two on-board microphones and scrubs out noise and echo before anything gets processed.

And the Home Mini's original speaker still works, which can be plugged back in via the included FPC cable.

For software, ESPHome is already preinstalled, ready to work with Home Assistant's Assist, Music Assistant, and Snapcast. A cloud LLM can also be dropped in as the conversation agent if you want one, but the whole thing runs fine without it.

Plus, the mute button on the device makes a physical disconnection at the hardware level, as on the original Home Mini. You will likewise find four SK6812 RGB LEDs sitting in the same positions as the original's, acting as status indicators.

Here are the full specs for you to go through:

- Main processor: ESP32-S3 (dual-core Xtensa LX7, 240 MHz), 8 MB PSRAM, 16 MB flash.

- Audio processor: XMOS XU316, 4 MB flash.

- Microphones: 2× MEMS (placed in the same location as the Home Mini)

- LEDs: 4× SK6812 RGB

- PCB: 4-layer, 72 × 70 mm, HASL lead-free.

- Connectivity: Wi-Fi 802.11 b/g/n (2.4 GHz) and Bluetooth 5.0 LE.

- License: CERN-OHL-S v2

Get Yours

At $85, the MiciMike board is available on Crowd Supply, with orders estimated to ship around October 1, 2026. US shipping is free, but international buyers must pay an additional $12.

The company behind it is the Ireland-based MiciMike ReV Devices, led by Imre László, who has put up the schematics, PCB design files, and the Bill of Materials on GitHub. The boards themselves are manufactured by Elecrow, a Shenzhen-based outfit behind a range of DIY and maker-focused hardware that we have covered a fair bit.

Before you go, know that there are plans for a drop-in replacement PCB for the Nest Mini. You can read about it on the official website.


Before we dive into the topic at hand, you should know that Euro-Office is a new European productivity project by Nextcloud and IONOS, which was forked from ONLYOFFICE.

It is a self-hosted, web-based office suite built for organizations and governments that want collaborative document editing on their own infrastructure. A big part of it is to move away from an office suite with ties to Russia, which has triggered concerns over digital sovereignty.

Following that, The Document Foundation (TDF), the nonprofit behind LibreOffice, publicly asked what document format this suite would use as its native format.

TDF has received no reply and has since put out a thank-you post to ODF contributors that also takes a dig at Euro-Office's silence.

TDF isn't happy

Toward the end of March, TDF published an open letter to European citizens arguing that digital sovereignty is not as simple as switching office software vendors. Real sovereignty, TDF said, requires open document formats, open fonts, and continuity of expertise, none of which come automatically with a vendor switch.

Then came the issue of OOXML versus ODF. OOXML, the format used by Microsoft Office, is designed and controlled entirely by Microsoft. Any office suite that defaults to OOXML compatibility is still structurally dependent on decisions made in the U.S., regardless of where it is hosted.

ODF, the Open Document Format, is what TDF wants Euro-Office to commit to instead. It is an ISO standard, developed openly without a single company controlling it.
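The two format families can be told apart mechanically, because both are ZIP containers with different marker entries: an ODF package carries a `mimetype` entry starting with `application/vnd.oasis.opendocument.`, while an OOXML package carries a `[Content_Types].xml` entry. The following Python sketch illustrates that distinction on tiny in-memory archives; the function names and sample archives are made up for the example and are not real documents or part of any office suite's API.

```python
import io
import zipfile


def container_family(data: bytes) -> str:
    """Classify a zipped office document as 'ODF', 'OOXML', or 'unknown'."""
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        names = set(zf.namelist())
        # ODF packages declare their type in a plain-text 'mimetype' entry.
        if "mimetype" in names:
            mimetype = zf.read("mimetype").decode("ascii", "replace").strip()
            if mimetype.startswith("application/vnd.oasis.opendocument."):
                return "ODF"
        # OOXML packages list their parts in '[Content_Types].xml'.
        if "[Content_Types].xml" in names:
            return "OOXML"
    return "unknown"


def make_zip(entries: dict[str, str]) -> bytes:
    """Build a small in-memory ZIP archive (illustration only)."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        for name, content in entries.items():
            zf.writestr(name, content)
    return buf.getvalue()


odt_like = make_zip({"mimetype": "application/vnd.oasis.opendocument.text"})
docx_like = make_zip({"[Content_Types].xml": "<Types/>"})
print(container_family(odt_like))   # ODF
print(container_family(docx_like))  # OOXML
```

A check like this only tells you which family a given file belongs to. It says nothing about which format a suite writes by default, which is the point TDF is pressing.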

They also noted that Euro-Office's launch press release made no mention of ODF as a native format and asked publicly whether it would be the default for documents created and shared between European public bodies.

What does this mean?

Euro-Office's GitHub does list ODF formats alongside DOCX, PPTX, and XLSX, so it's not like they've excluded open formats entirely. But their FAQ frames the whole thing around "great MS compatibility," which is a problem.

Supporting a format and making it your native default are two different things. The distinction is relevant for any European institution that actually wants to break the dependency on Microsoft rather than just move it to a different server rack.

Whether Euro-Office addresses this directly or keeps quiet, TDF's question is now out there. And given that Germany has already mandated ODF by law, it's not a question that's going away anytime soon.

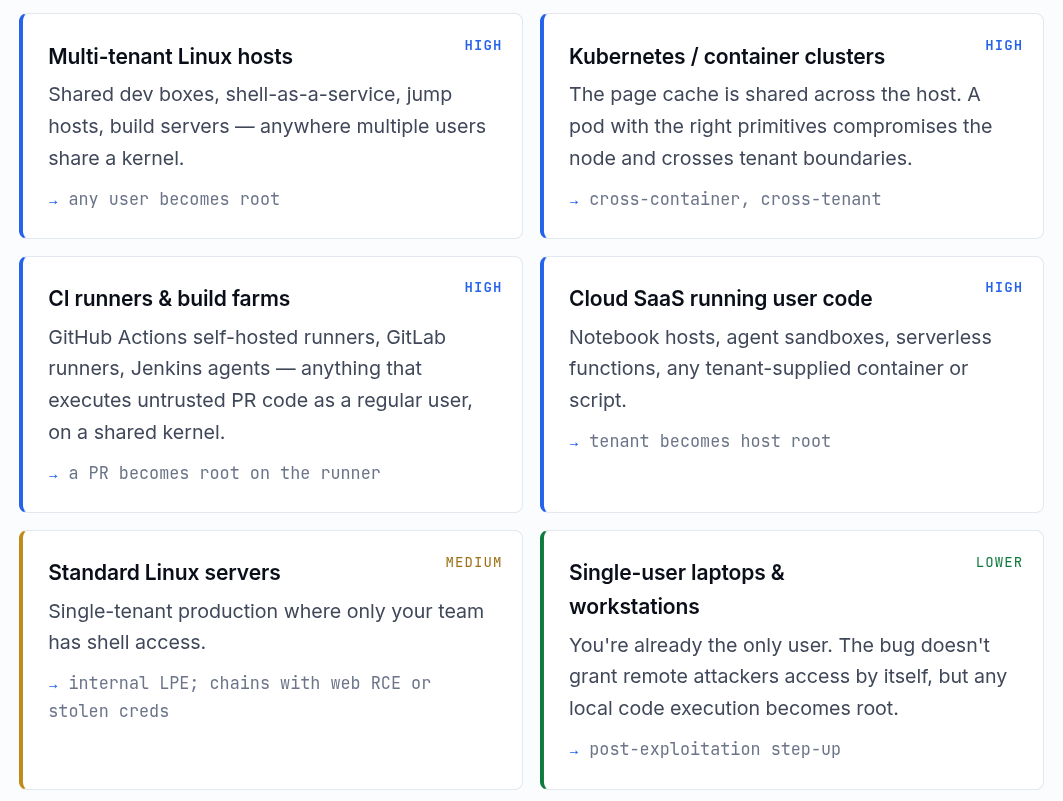

- A 9-year-old bug was discovered recently.

- The vulnerability is already patched in the Linux kernel.

- Normal users could gain root access by running a small Python script.

- Not much of a bother for regular desktop Linux users who keep their systems updated.

- Could be problematic for cloud servers and containers if the kernel is not updated.

A logic flaw that sat quietly in the Linux kernel since 2017 has finally been found and disclosed. For a brief window, it let any unprivileged local user on a Linux system escalate to root with a script smaller than most config files.

The flaw is in a kernel subsystem that lets regular programs tap into built-in cryptographic functions. By feeding it file data in a specific way, an attacker can get the kernel to quietly overwrite 4 bytes of any file's in-memory copy.

The actual file on disk stays intact the whole time, so any tool checking file integrity will see nothing wrong. The exploit is just a 732-byte Python script that doesn't require any additional dependencies or compilation.

The vulnerability is tracked as CVE-2026-31431, goes by the name "Copy Fail," and was discovered by researchers at Theori using their AI security research tool, Xint Code.

The security researchers tested it on Ubuntu 24.04 LTS, Amazon Linux 2023, RHEL 10.1, and SUSE 16, getting root on all four with the exact same script each time.

They had reported the issue to the Linux kernel security team on March 23, received acknowledgment the next day, and had a patch proposed and reviewed by March 25. The fix was committed to mainline on April 1, with the CVE assigned on April 22, and public disclosure following on April 29 (linked earlier).
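To put the timeline above in numbers, here is a minimal Python sketch that counts the days between the initial report and each later milestone. The year 2026 is an assumption inferred from the CVE identifier, and the `timeline` dictionary and `days_since_report` helper are illustrative names, not anything published by Theori.

```python
from datetime import date

# Dates taken from the disclosure timeline; the year is an assumption
# inferred from the CVE identifier (CVE-2026-31431).
YEAR = 2026
timeline = {
    "reported": date(YEAR, 3, 23),
    "acknowledged": date(YEAR, 3, 24),
    "patch reviewed": date(YEAR, 3, 25),
    "fixed in mainline": date(YEAR, 4, 1),
    "CVE assigned": date(YEAR, 4, 22),
    "public disclosure": date(YEAR, 4, 29),
}


def days_since_report(event: str) -> int:
    """Number of days between the initial report and the given event."""
    return (timeline[event] - timeline["reported"]).days


for event in timeline:
    print(f"{event}: day {days_since_report(event)}")
```

Under that assumption, the fix reached mainline 9 days after the report, and public disclosure followed 37 days after the report.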

Who needs to worry, and who doesn't?

According to the Copy Fail website hosted by Theori, the risk level varies quite a bit depending on how you run Linux.

At the top are multi-tenant Linux hosts, Kubernetes and container clusters, CI runners and build farms, and cloud SaaS environments running user-supplied code.

These all get a "High" risk rating. Containers and cloud workloads are especially exposed because the Linux page cache, the part of memory this exploit corrupts, is shared across the entire host, container boundaries included.

A compromised container can take down the whole node, and a bad pull request run on a shared CI runner could hand an attacker root on that machine.

Standard Linux servers where only the team running it has shell access get a "Medium" rating, whereas personal desktops and laptops are at the bottom with a "Lower" risk rating.

Copy Fail needs local code execution to work, so it won't get anyone in remotely by itself. If malware is already running on your machine, this could be used to escalate to root, but that's a bigger problem either way.

To fix this, patching the kernel is the way. Most major distros have updates out or on the way. If patching isn't immediately possible, Theori recommends blacklisting the algif_aead kernel module as a stopgap:

echo "install algif_aead /bin/false" > /etc/modprobe.d/disable-algif-aead.conf

rmmod algif_aead 2>/dev/null

As of writing, Microsoft has noted that exploitation remained "limited and primarily observed in proof-of-concept testing," so there's no confirmed mass-scale campaign just yet.

That said, CISA, the US cybersecurity agency, has added Copy Fail to its Known Exploited Vulnerabilities (KEV) catalog, ordering US federal agencies to patch their Linux systems by May 15.

It also urged other organizations to treat it as a priority regardless of whether the federal deadline applies to them.
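If you applied the algif_aead stopgap mentioned above, you may want to confirm it took effect. The following is a minimal Python sketch, not an official Theori tool: it parses text in the format of /proc/modules (one loaded module per line, name first) and of a modprobe configuration file. The function names and the sample strings are hypothetical. On a real system you would feed it the contents of /proc/modules and /etc/modprobe.d/disable-algif-aead.conf.

```python
def module_is_loaded(proc_modules_text: str, name: str = "algif_aead") -> bool:
    """Return True if `name` is listed as a loaded module.

    Expects text in the format of /proc/modules, where each line
    starts with the module name.
    """
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields and fields[0] == name:
            return True
    return False


def module_is_blocked(conf_text: str, name: str = "algif_aead") -> bool:
    """Return True if the config contains `install <name> /bin/false`."""
    for line in conf_text.splitlines():
        # Ignore anything after a '#' so commented-out rules do not count.
        fields = line.split("#", 1)[0].split()
        if fields[:3] == ["install", name, "/bin/false"]:
            return True
    return False


# Hypothetical sample inputs standing in for the real files.
sample_modules = "af_alg 32768 0 - Live 0x0000000000000000\n"
sample_conf = "install algif_aead /bin/false\n"

print("loaded:", module_is_loaded(sample_modules))
print("install rule present:", module_is_blocked(sample_conf))
```

This only covers the loadable-module case. Code compiled directly into the kernel does not appear in /proc/modules, so patching the kernel remains the actual fix.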

Back in November 2025, Jan Vlug, a software engineer who writes for the Dutch government's developer portal, published a detailed blog post recommending which Git forge the Netherlands should adopt for hosting its government source code.

His post came at a time when the Ministry of the Interior (BZK) was already setting up a dedicated Git instance, and the platform decision was still open.

Currently, the Dutch government's code is spread across GitHub and GitLab, neither of which is under government oversight.

GitHub got ruled out first because it's proprietary software, which directly conflicts with the government's own policy of preferring open source when options are equally suitable.

GitLab made it further in the evaluation but didn't survive it either. The issue was its open-core model, where the Community Edition is genuinely free software but the Enterprise Edition is not.

The solution

Forgejo came out on top due to its fully free and open source nature. Licensed under GPLv3+ and governed by Codeberg e.V., a democratic nonprofit, it has no enterprise tier, proprietary upsell, or vendor lock-in problems.

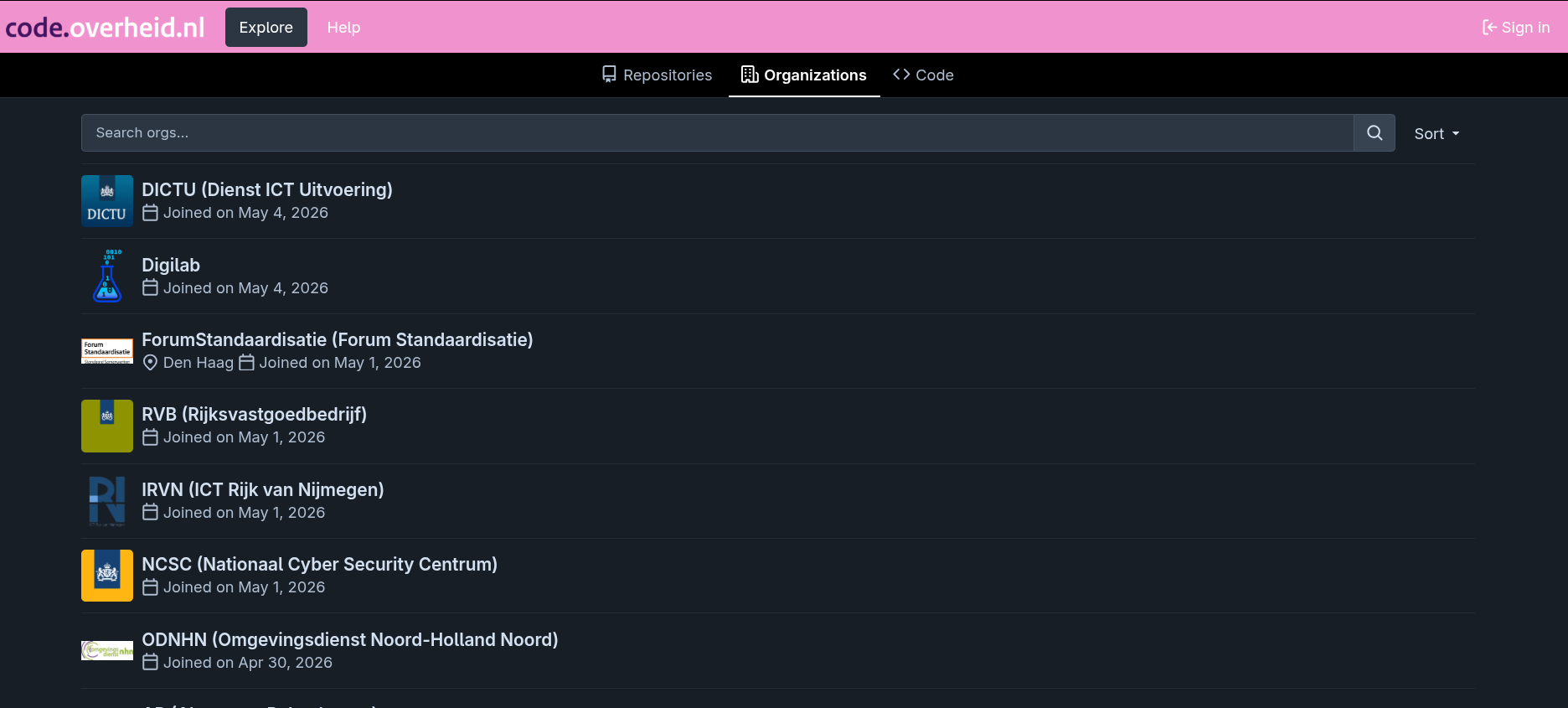

On April 24, 2026, code.overheid.nl had its soft launch, with developer advocate Tom Ootes writing about it on developer.overheid.nl. He framed it as a collective project to build something together rather than ship something finished.

The platform is a self-hosted Forgejo instance, running on Dutch government infrastructure managed by SSC-ICT (DAWO). It's free for all government organizations and is built around the following goals.

Open source development with proper Git tooling, including pull requests, issue tracking, and code reviews; government-wide collaboration to reduce duplicate development across agencies; and sovereignty through full control over the hosting environment.

As mentioned earlier, this initiative is still in the pilot phase, with the rollout being kept deliberately gradual.

Not every government organization can sign up yet, and the idea is to build it alongside the developers who will actually use it, with early participants encouraged to file issues and open pull requests on the platform itself.

What's already in?

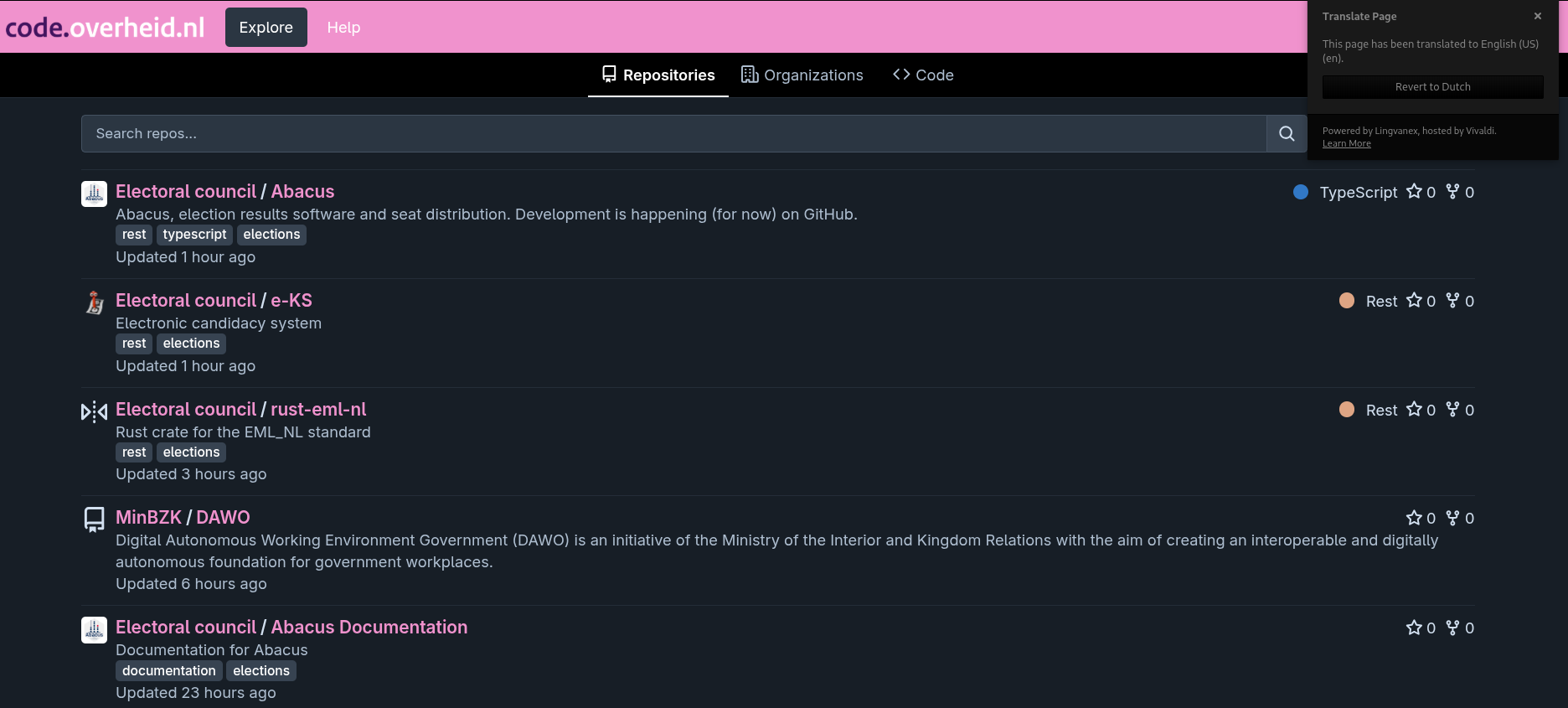

I had to translate the repos page to see what was in there.

The platform is live and already hosts some content. The most notable presence is Kiesraad, the Dutch Electoral Council, which has pushed several election-related repositories including Abacus, the software used for vote counting and seat distribution, and e-KS, an electronic candidate nomination system.

The Ministry of the Interior (BZK) has the DAWO project (their digital autonomous workplace initiative) on there, along with a DigiD source code release published under a freedom of information ruling.

On the organization side, the list of who has joined since the April 24 soft launch is telling. Multiple national ministries are already on the platform: Finance, Foreign Affairs, Agriculture, and Interior.

Several major municipalities have also signed up, including The Hague, Utrecht, Leiden, and Arnhem. For a platform still in pilot with no formal launch announcement, that's a fairly significant roster.

On 6 May 2026 at 22:09, Bob Weinand bobwei9@hotmail.com wrote:

Volker and I drafted an RFC:

https://wiki.php.net/rfc/scope-functions

Please consider it and share your feedback.

I hope it will alleviate pain around some of the most common forms of Closure usage which is "execute this now as part of the called function", which currently can require a lot of "use ($variables)".

For me the primary use case of use ($capturing) was always "I need this function later and want to explicitly document what escapes my function". This, however, required this straightforward usage of Closures to also document every single usage of a variable. Which is really not that beneficial at all.

Thus the scope functions as proposed will be able to fill that gap in future.

Thank you,

Bob

Hi,

This is nice. As I understand it, this RFC could resolve problems that the Context Managers RFC tries to resolve in a simpler and more flexible way. (And it resolves other problems too, of course.)

Taking the first example from the Context Manager RFC:

    using (file_for_write('file.txt') => $fp) {
        foreach ($someThing as $value) {
            fwrite($fp, serialize($value));
        }
    }

    // implementable as:
    function file_for_write(string $filename): ContextManager {
        return new class($filename) implements ContextManager {
            function __construct(private readonly string $filename) { }

            private $fp;

            function enterContext() {
                $this->fp = @fopen($this->filename, 'w');
                if (!$this->fp) {
                    throw new \RuntimeException('Couldn’t open file');
                }
                return $this->fp;
            }

            function exitContext(?\Throwable $e = null): ?\Throwable {
                @fclose($this->fp);
                return $e;
            }
        };
    }

This can be rewritten as:

    file_for_write('file.txt', fn($fp) {
        foreach ($someThing as $value) {
            fwrite($fp, serialize($value));
        }
    });

    // implementable as (which is simpler: one function instead of a whole class):
    function file_for_write(string $filename, callable $do_write): void {
        $fp = @fopen($filename, 'w');
        if (!$fp) {
            throw new \RuntimeException('Couldn’t open file');
        }
        try {
            $do_write($fp);
        } finally {
            @fclose($fp);
        }
    }

For those of us that abhor exceptions in case of recoverable failure, there is even more. With this RFC, one can easily return true/false (or whatever other signal) for success/failure, while Context Manager strongly leans towards the use of exceptions (although, of course, it remains possible to assign the outcome to a variable and to exit the context with break or goto):

    $ok = file_for_write('file.txt', fn($fp) {
        foreach ($someThing as $value) {
            if (something_is_wrong_with($value))
                return false;
            fwrite($fp, serialize($value));
        }
        return true;
    });

    // implementable as (which is more flexible: exceptions are not the only type of signal):
    #[\NoDiscard]
    function file_for_write(string $filename, callable $do_write): bool {
        $fp = @fopen($filename, 'w');
        if (!$fp) {
            return false;
        }
        try {
            return $do_write($fp);
        } finally {
            @fclose($fp);
        }
    }

—Claude

On 4 May 2026 21:24:39 BST, Daniel Scherzer daniel.e.scherzer@gmail.com wrote:

Hi internals,

I'd like to start the discussion for a new RFC about adding a new method, ReflectionAttribute::getCurrent(), to access the current reflection target of an attribute.

"a new static method, ReflectionAttribute::getCurrent(), that, when called from an attribute constructor, returns a reflection object corresponding to what the attribute was applied to."

This sounds like an arbitrary new rule for just this functionality. I don't think we should have special rules for a single static method call.

I believe it's useful to have something like this, but I'm not in favour of this approach.

Would it not be possible for this to be a normal (dynamic) method on the ReflectionAttribute object?

cheers

Derick

In order to reduce the scope of the weird new method, I have updated the RFC to split it up:

- ReflectionAttribute::getCurrent(), when called from an attribute constructor, returns a ReflectionAttribute matching the usage (replacing the currently-possible hacking with backtraces)

- ReflectionAttribute::getReflectionTarget() is a normal (dynamic) method returning the ReflectionAttributeTarget

These are expected to be used together in the constructors of attributes, e.g. ReflectionAttribute::getCurrent()->getReflectionTarget(), but the normal getReflectionTarget() method is also useful and usable elsewhere.

-Daniel

Hey Larry,

On 19 January 2026 at 16:58, Larry Garfield larry@garfieldtech.com wrote:

As noted in Future Scope, we can add function-based context managers as well based on generators. At the moment we're not convinced it's necessary, but it's a straightforward add-on if we find that always writing a class for a context manager is too cumbersome.

The issue with punting this behavior to user-space is that a library cannot provide this sort of functionality in a clean way.

In an ideal world, if we had auto-capturing long-closures, then I would agree this is largely unnecessary and could instead be implemented like so (to reuse the examples from the RFC):

    $conn->inTransaction(function () {
        // SQL stuff.
    });

    $locker->lock('file.txt', function () {
        // File stuff.
    });

    $scope->inScope(function () {
        $scope->spawn(yadda yadda);
    });

    $errorHandlerScope->run(fn() => null, function () {
        // Do stuff here with no error handling.
    });

And so forth. If we had auto-capturing closures, I would probably argue that is a better approach.

However, auto-capturing closures have been rejected several times, and I have no confidence that we will ever get them. (Whether you approve or disapprove of that is your personal opinion.) The current alternative involves using lots of use clauses, which is needlessly clunky to the point that folks try to avoid it.

I literally have code like this in a project right now, and I've had to do this many times:

    public function parseFolder(PhysicalPath $physicalPath, LogicalPath $logicalPath, array $mounts): bool
    {
        return $this->cache->inTransaction(function() use ($physicalPath, $logicalPath, $mounts) {
            // Lots of SQL updates here.
        });
    }

That's just gross. :-) This is exactly the example that's been used in the past to argue in favor of auto-capturing closures, but it's never been successful.

I fully agree that this is gross. I have just created a comprehensive RFC https://wiki.php.net/rfc/scope-functions to address this underlying problem you describe.

It does address quite a few of the main issues people had with trivial auto-capturing Closures which would simply clone the symbol table.

I personally really don't like this Context Managers RFC given the apparent complexity it has (only for heavyweight usages basically, library style - you wouldn't just create ContextManager implementing classes ad hoc for everything).

Thus, I'd like to ask you to consider my RFC first and give feedback on it, and possibly - obviously only if you think my RFC is a good choice for the language - pause this RFC for as long as mine is under discussion.

Thanks,

Bob

On 22 April 2026 at 20:28:15 GMT+02:00, Larry Garfield larry@garfieldtech.com wrote:

I will stop here, however, and ask for input from the audience. (Not just the regulars in this thread of late, but all of you reading this.) Including if you have an alternate approach to the three listed above that would have notably fewer cons.

--Larry Garfield

I prefer the void return and throw if needed approach, it looks way more understandable. I was confused by that part when reading the RFC and really surprised that returning a Throwable on success is ignored, which is not clear at all when reading the interface.

By which you mean the "if you do nothing, the exception is swallowed" approach? (IE, more work in the common case.)

My reluctance there is that it will become really easy to forget to propagate.

    public function exitContext(?Throwable $e) {
        fclose($this->fp);
    }

That seems like it should be all you need, but it will also silently swallow any errors, so whatever code uses this context manager won't know if it was successful or not. That seems not-great to me.

The in-out parameter works too but is a bit weirder, and makes it unclear what happens if exitContext throws.

It's also unclear to me in the current desugared version what happens when exitContext throws: does the reset of the context var not happen? There is nothing to handle that.

Côme

We'll have to clean up the desugared versions once we decide what they should actually be. :-) There's probably a bug in there at the moment.

--Larry Garfield

In PHP, the native clone keyword performs a shallow copy: nested objects remain shared with the original instance. Deep cloning recursively clones the full object graph so the clone shares no references with the original.

Deep cloning in PHP has traditionally relied on unserialize(serialize($value)). Although effective, this approach is slow and memory-intensive because it breaks copy-on-write (COW) semantics by rebuilding the entire value graph from a serialized representation.

Symfony 8.1 introduces a new DeepCloner class in the VarExporter component that deep-clones PHP values while preserving COW for strings and arrays. Instead of serializing data, it reconstructs the object graph directly, making cloning significantly faster and more memory efficient.
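The shallow-versus-deep distinction described above is not specific to PHP. As a rough, language-neutral illustration, here is a minimal Python sketch in which `copy.copy` plays the role of PHP's `clone` and `copy.deepcopy` stands in for a deep clone. It says nothing about how DeepCloner works internally, and the `User` and `Address` classes are hypothetical.

```python
import copy


class Address:
    def __init__(self, city: str) -> None:
        self.city = city


class User:
    def __init__(self, name: str, address: Address) -> None:
        self.name = name
        self.address = address


original = User("Alice", Address("Paris"))

# Shallow copy: a new User object that still points at the same Address.
shallow = copy.copy(original)

# Deep copy: a new User object with its own, independent Address.
deep = copy.deepcopy(original)

# Mutating the nested object through the shallow copy leaks into the original.
shallow.address.city = "Lyon"

print(original.address.city)  # Lyon: the nested object is shared
print(deep.address.city)      # Paris: the deep copy is unaffected
```

The nested `Address` is shared after a shallow copy, so a change made through one owner is visible through the other. Avoiding that sharing is the reason to deep-clone.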

Basic Usage

For a one-off deep clone, use the static deepClone() method:

    use Symfony\Component\VarExporter\DeepCloner;

    $clone = DeepCloner::deepClone($originalObject);

To clone the same prototype repeatedly, create a DeepCloner instance once. The object graph is analyzed upfront, making subsequent clone() calls much cheaper:

    $cloner = new DeepCloner($prototype);
    $clone1 = $cloner->clone();
    $clone2 = $cloner->clone();

You can also clone the root object into a compatible class with cloneAs():

    $childDefinition = (new DeepCloner($definition))
        ->cloneAs(ChildDefinition::class);

DeepCloner instances can also be exported to arrays and restored later, making them suitable for caching or transport across processes (json_encode(), MessagePack, APCu, OPcache-warmed .php files, etc.). The payload is typically 30-40% smaller than serialize($value):

    $payload = (new DeepCloner($graph))->toArray();
    $json = json_encode($payload);

    // ... store, cache or send the payload ...

    $clone = DeepCloner::fromArray(json_decode($json, true))->clone();

Finally, the lower-level Hydrator and Instantiator classes are deprecated in 8.1 in favor of the single deepclone_hydrate() function which instantiates and hydrates an object (including private, protected and readonly properties) in a single call:

    // Before (deprecated in 8.1):
    $user = Instantiator::instantiate(User::class);
    Hydrator::hydrate($user, ['name' => 'Alice']);

    // After:
    $user = deepclone_hydrate(User::class, ['name' => 'Alice']);

Real-World Impact Inside Symfony

In benchmarks, DeepCloner consistently outperforms unserialize(serialize()): it is 4x faster for typical object graphs (100 objects with a few properties each) and up to 15x faster for graphs with many properties (50 objects with 20 properties each), while also using significantly less memory.

That's why DeepCloner is not a niche addition for VarExporter users. Symfony 8.1 now uses DeepCloner internally in several core components:

- DependencyInjection: for cloning service definitions during container compilation;

- FrameworkBundle: when dumping the compiled container for debugging;

- Form: for cloning form data snapshots between requests;

- Cache: in the ArrayAdapter implementation.

As a result, Symfony applications automatically benefit from faster container compilation, lower memory usage, and more efficient in-memory caching.

Bonus: the ext-deepclone PHP Extension

Alongside DeepCloner, the Symfony team has released a new PHP extension, symfony/php-ext-deepclone. It provides native implementations of the deepclone_to_array(), deepclone_from_array() and deepclone_hydrate() functions.

When the extension is installed, DeepCloner transparently uses it instead of the userland polyfill, providing even better performance without requiring any application changes.

Symfony 8.1.0-BETA1 has just been released.

This is a pre-release version of Symfony 8.1. If you want to test it in your own applications before its final release, run the following commands:

    $ composer config minimum-stability beta
    $ composer config extra.symfony.require "8.1.*"
    $ composer update

These commands assume that all your Symfony dependencies in composer.json use * as their version constraint. Otherwise, you will need to update the version constraints of those Symfony dependencies to 8.1.*.
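To see which constraints would need that manual update, a small script can list the symfony/* requirements that use neither * nor 8.1.*. This is a minimal Python sketch and not part of Composer or Symfony. The `constraints_to_review` helper and the sample composer.json content are hypothetical.

```python
import json


def constraints_to_review(composer_json: str, target: str = "8.1.*") -> dict[str, str]:
    """List symfony/* requirements whose constraint is neither "*" nor the target."""
    data = json.loads(composer_json)
    found = {}
    for section in ("require", "require-dev"):
        for package, constraint in data.get(section, {}).items():
            if package.startswith("symfony/") and constraint not in ("*", target):
                found[package] = constraint
    return found


# Hypothetical composer.json content, for illustration only.
sample = json.dumps({
    "require": {
        "php": ">=8.4",
        "symfony/console": "*",
        "symfony/framework-bundle": "8.0.*",
    },
    "require-dev": {"symfony/var-dumper": "8.1.*"},
})

print(constraints_to_review(sample))
```

Treat the result as a list to review, not to rewrite blindly: some packages under the symfony/ vendor name, such as symfony/flex, follow their own version numbers.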

Read the Symfony upgrade guide to learn more about upgrading Symfony and use the SymfonyInsight upgrade reports to detect the code you will need to change in your project.

Tip

Want to be notified whenever a new Symfony release is published? Or when a version is not maintained anymore? Or only when a security issue is fixed? Consider subscribing to the Symfony Roadmap Notifications.

Changelog Since Symfony 8.0

- data #64149 Release v8.1.0-BETA1

- data #64145 Release v8.0.10

- feature #63751 [DependencyInjection][HttpKernel] Add support for resetting non-shared services (@Pechynho)

- feature #63689 [Routing] Allow collection prefixes to disable trailing slash on root (@vvaswani)

- feature #62127 [RateLimiter] Add calendar-aligned mode to FixedWindowLimiter (@Crovitche-1623)

- bug #64128 [Messenger] Fix "--fetch-size" option rejecting valid values (@xeno-suter)

- feature #64118 [Security] Revert "Add per-username login rate-limit to prevent brute-force attacks" (@wouterj)

- bug #63976 [FrameworkBundle] Remove console service definitions already declared by ConsoleBundle (@Jean-Beru)

- feature #64094 [Messenger] Deprecate StopWorkerOnTimeLimitListener in favor of time_limit worker option (@Toflar)

- feature #64104 [Security] Add per-username login rate-limit to prevent brute-force attacks (@ayyoub-afwallah)

- feature #63862 [Console] Use ECH sequence for block padding (@Amoifr)

- feature #64087 [HttpKernel][VarDumper][WebProfilerBundle] Forward CSP nonce to dump() instead of disabling CSP (@Amoifr)

- feature #64079 [ErrorHandler] Trigger @method deprecation notices for abstract classes (@lacatoire)

- feature #46654 [Serializer] Add COLLECT_EXTRA_ATTRIBUTES_ERRORS and full deserialization path (@NorthBlue333)

- feature #62112 [Console] Add support for OSC 9;4 escape sequence for progress reporting (@canvural)

- feature #52173 [Serializer] Add AbstractObjectNormalizer::ENABLE_TYPE_CONVERSION for scalar type transformation (@Jeroeny)

- feature #60008 [HttpFoundation] Add SessionHasFlashMessage test constraint (@Pierstoval)

- feature #63810 [VarDumper] Dump class-strings as class stubs with source location and static properties (@lyrixx)

- feature #63929 [TwigBundle] Add twig.safe_class resource tag to register safe classes for the escaper (@GromNaN)

- feature #62801 [Notifier][Prelude] Add bridge (@zairigimad)

- feature #63727 [Form] Use translation_domain for expanded ChoiceType placeholder (@eyupcanakman)

- feature #63762 [VarDumper] Add CSP nonce support to HtmlDumper (@Amoifr)

- bug #63789 [ObjectMapper] Fix #[Map] attribute breaking auto-mapping of other properties (@Amoifr)

- feature #63809 [Workflow] Add support for dumping listeners in Graphviz diagrams (@lyrixx)

- feature #63879 [JsonStreamer] Add DateTimeZone value object support (@mtarld)

- feature #63905 [FrameworkBundle] Add --sort option to debug:router command (Michael Thieulin)

- feature #63907 [RateLimiter] Add #[RateLimit] attribute to rate limit controllers declaratively (@ayyoub-afwallah)

- feature #63925 [MonologBridge] Add $subjectMaxLength option to MailerHandler (@Amoifr)

- feature #63945 [Validator] Make constraint validators reentrant instead of being stateful (@stof)

- feature #64055 [Runtime] Add FRANKENPHP_RESET_KERNEL to reset the kernel between requests (@nicolas-grekas)

- feature #63988 [DependencyInjection] Support autowiring env vars as closures or Stringable when using #[Autowire(env: 'FOO')] (@nicolas-grekas)

- feature #64070 [Messenger] Release deduplication lock on definitive failure (@ousamabenyounes)

- feature #63778 [Tui] Add the component (@fabpot)

- feature #64049 [VarExporter] Deprecate Hydrator and Instantiator classes (@nicolas-grekas)

- feature #64009 Improve phpdoc types (@stof)

- feature #63910 [DependencyInjection] Allow inline Definition as factory and configurator (@GromNaN)

- bug #63962 [DependencyInjection] Fix empty bundle cache when container is rebuilt (@HypeMC)

- feature #63943 [WebProfilerBundle] Improve toolbar accessibility for screen reader (@Nitram1123)

- feature #63912 [JsonStreamer] Improve error message when unable to encode (@GaryPEGEOT)

- bug #63921 [DependencyInjection] Fix bundles cache freshness check (@HypeMC)

- feature #63695 [VarExporter] Make DeepCloner::__serialize() return a pure array and add toArray()/fromArray() (@nicolas-grekas)

- bug #63913 [ErrorHandler] Fix http_response_code() warning on PHP 8.5 for max execution time errors (@ruudk)

- feature #63880 [DependencyInjection] Add #[RequiredBundle], ServicesBundle and ConsoleBundle (@nicolas-grekas)

- bug #63765 [VarExporter] Fix serialization of objects with __serialize/__unserialize (@Amoifr)

- bug #63895 [HttpKernel] Fix cache warmers running on every kernel boot instead of only on compilation (@nicolas-grekas)

- feature #63877 [DependencyInjection] Add AddBehaviorDescribingTagsPass (@nicolas-grekas)

- feature #63875 [EventDispatcher] Add hot-path and no-preload support to AddEventAliasesPass (@nicolas-grekas)

- bug #63863 [Console] Allow nullable #[Autowire] service references in command arguments (@ruudk)

- feature #63710 [DependencyInjection] Add Kernel and Bundle infrastructure for HTTP-less DI-powered apps (@nicolas-grekas)

- feature #63771 [Security] Add enforce_key_usage_verification option to OIDC discovery (@ruudk)

- feature #60334 [Twig] Add daisyUI form layout (@Oviglo)

- feature #63742 [JsonStreamer] Add DateInterval value object support (@mtarld)

- feature #63745 [FrameworkBundle][HttpKernel] Deprecate Bundle::registerCommands() (@derrabus)

- feature #63735 [JsonStreamer] Support date time timezone (@mtarld)

- feature #63714 [Console] Add validation constraints support to #[MapInput] (@chalasr)

- bug #63704 [VarExporter] Fix DeepCloner crash with objects using __serialize() (@pcescon)

- feature #63709 [Console] Add optional PSR container parameter to Application (@nicolas-grekas)

- feature #57598 [Console] Expose the original input arguments and options and to unparse options (@theofidry)

- feature #52134 [HttpKernel] Add option to map empty data with MapQueryString and MapRequestPayload (@Jeroeny)

- feature #41574 [Messenger] Add AmqpPriorityStamp for per-message priority on AMQP transport (Valentin Nazarov)

- feature #63661 [Runtime] Add FrankenPhpWorkerResponseRunner for simple response return (@guillaume-sainthillier)

- feature #63666 [Messenger] Allow configuring the service reset interval in the messenger:consume command via the --no-reset option (@nicolas-grekas)

- feature #63665 [Messenger] Add MessageExecutionStrategyInterface and refactor Worker to use it (@nicolas-grekas)

- feature #63662 [Messenger] Add a --fetch-size option to the messenger:consume command to control how many messages are fetched per iteration (@nicolas-grekas)

- feature #63663 [Contracts] Add ContainerAwareInterface (@nicolas-grekas)

- feature #63631 [Form] Add labels option to DateType to customize year/month/day sub-field labels (@guillaumeVDP)

- feature #62823 [JsonPath] Add custom function support (@alexandre-daubois)

- bug #63640 [VarExporter] Fix readonly deep-cloning and Instantiator cache priming (@nicolas-grekas)

- feature #63590 [DependencyInjection] Add support for using service stacks as decorators (@nicolas-grekas)

- feature #63595 [ObjectMapper] Add IsNotNull built-in condition (@Nayte91)

- feature #63612 [VarExporter] Add DeepCloner for COW-friendly deep cloning (@nicolas-grekas)

- feature #51379 [HttpKernel] Adding new #[MapRequestHeader] attribute and resolver (@StevenRenaux)

- feature #63585 [Security] Deprecate erase_credentials config, container parameter and AuthenticatorManager constructor argument (@guillaumeVDP)

- feature #62029 [Mailer][SES] Allow configuring port and tls options (@adars)

- feature #63615 [Uid] Pass invalid UID value to InvalidArgumentException for better debug (@rela589n)

- feature #63339 [JsonStreamer] Handle value objects (@mtarld)

- feature #63383 [ObjectMapper] Allow class FQDN arrays as TargetClass and SourceClass param (@rrajkomar)

- feature #63520 [FrameworkBundle] Allow configuring Webhook's header names and signing algo (@lacatoire)

- feature #63593 [Uid] Add Uuid47Transformer support for UUIDv7/v4 conversion (@nicolas-grekas)

- feature #63528 [TypeInfo] Resolve tentative return types (@valtzu)

- feature #63546 [Console] Add outline-style block methods to SymfonyStyle (@guillaumeVDP)

- feature #63581 [FrameworkBundle][Messenger] Deprecate "senders" nesting level in routing config (@W0rma)

- feature #63441 [HttpClient] Default CachingHttpClient's $maxTtl to 86400s to prevent eternal cache items (@Lctrs, @nicolas-grekas)

- bug #63597 [FrameworkBundle] Fix wiring of "debug.console.argument_resolver" (@nicolas-grekas)

- feature #63541 [HttpFoundation] Deprecate setting public properties of Request and Response objects directly (@nicolas-grekas)

- feature #63552 [Runtime] Add SymfonyRuntime::resolveType() for customizing how types are resolved in extending runtimes (@Kingdutch)

- feature #63481 [ErrorHandler] Allow namespace remapping in DebugClassLoader to relax the "same vendor" constraint (@mpdude)

- feature #63524 [Translation][Crowdin] Replace deprecated Upload Translations method with Import Translations (@bhdnb)

- feature #63505 [FrameworkBundle] Deprecate terminate_on_cache_hit http_cache option (@Mynyx)

- feature #63538 [Console] Sort suggested commands alphabetically (@javiereguiluz)

- feature #63537 [Console] Replace executeCommand() by runCommand() when testing commands (@javiereguiluz)

- feature #45553 [Translation] Extract locale fallback computation into a dedicated class (@mpdude)

- feature #63433 [HttpClient] Add GuzzleHttpHandler that allows using Symfony HttpClient as a Guzzle handler (@nicolas-grekas)

- feature #63465 [Translation] Add support for XLIFF PGS (Plural, Gender, and Select Module) (@alexandre-daubois)

- feature #62888 [Messenger] Route decode failures through failure handling (@nicolas-grekas)

- feature #63453 [Console][WebProfilerBundle] Trace argument value resolvers (@chalasr)

- feature #52055 [Console] Deprecate combining incompatible mode flags in InputArgument and InputOption (@jnoordsij)

- feature #52058 [Console] Allow setting a boolean default value on InputOption::VALUE_NEGATABLE options (@jnoordsij)

- feature #45081 [Form] Add support for submitting forms with unchecked checkboxes in request handlers (@filiplikavcan)

- feature #63443 [Console] Add customization to SymfonyStyle progressBar (@guillaumeVDP)

- feature #63451 [PropertyInfo] Add support for property hook settable types (@alexandre-daubois)

- feature #63455 [Translation] Add support for XLIFF versions 2.1 and 2.2 (@alexandre-daubois)

- feature #63427 [WebLink] : Adding consts for as attributes (@ThomasLandauer)

- feature #63426 [DependencyInjection] Deprecate named autowiring alias that don't use #[Target] (@nicolas-grekas)

- feature #63429 [FrameworkBundle] Add MicroKernelTrait::$allowedEnvs to enforce allowed values for APP_ENV (@vincentpabst)

- bug #63402 [Messenger] Improve PostgreSQL LISTEN/NOTIFY idle listener (@nicolas-grekas)

- feature #61494 [Console] Allow to test the different streams at the same time with a new result-based testing API for CommandTester (@theofidry)

- feature #63371 [Form] Add uid_format option to EntityType (@lacatoire)

- feature #61462 [Messenger] Add ListableReceiverInterface support to RedisReceiver (@mudassaralichouhan)

- feature #49388 [CssSelector] Add :has() support (@franckranaivo)

- feature #63242 [FrameworkBundle] Add decoration stack to debug:container command (@ayyoub-afwallah)

- bug #63385 [Contracts] skip property hook check on PHP < 8.4 (@xabbuh)

- feature #63373 [HttpFoundation] Add BinaryFileResponse::shouldDeleteFileAfterSend() (@lacatoire)

- feature #49518 Create #[Serialize] Attribute to serialize Controller Result (@Koc)

- feature #63355 [Validator] Add ValidatorBuilder::enablePropertyMetadataExistenceCheck() (@lacatoire)

- feature #63335 [TwigBridge] Add form_flow_* helper functions (@ker0x)

- feature #63356 [FrameworkBundle] Configure custom marshaller per cache pool (@mvanduijker)

- feature #63359 [Console] Allow passing Validator constraints to QuestionHelper and #[Ask] (@chalasr)

- feature #54817 [HttpKernel] Support variadic with #[MapRequestPayload] (@DjordyKoert)

- feature #63360 [Validator] Add clock-awareness to comparison and range validators (@lacatoire)

- bug #63361 [FrameworkBundle] Properly set tag "lock.store" on flock and semaphore stores when they're used (@nicolas-grekas)

- feature #63346 [Messenger][AMQP] Allow disabling default queue binding via queues option (@xammmue)

- feature #52265 [HttpClient] Configure MockClient if mock_response_factory has been set on a scoped client (@tarjei)

- feature #63293 [Console] Add image support to QuestionHelper and #[Ask] (@chalasr)

- feature #47969 [Filesystem] rename Filesystem::mirror() option copy_on_windows to follow_symlinks (@maxbeckers)

- feature #58871 [Form] BirthdayType has automatic attr when widget is single_text (@nicolas-grekas)

- feature #59202 [Semaphore] Add a semaphore store based on locks (@alexander-schranz)

- bug #63341 [WebProfilerBundle] fix compatibility with HttpKernel component < 8.1 (@xabbuh)

- feature #47666 [Messenger] Move PostgreSQL LISTEN/NOTIFY blocking to worker idle event listener (@d-ph)

- feature #62638 [DependencyInjection] Add tag decoration support (@mtarld)

- feature #62320 [WebProfiler] add cURL copy/paste to request tab (@darkweak)

- feature #63214 [Form] Allow ViolationMapperInterface injection for ValidatorExtension and FormTypeValidatorExtension (@ktherage)

- feature #63304 [Contracts][DependencyInjection] Support hooked properties in ServiceMethodsSubscriberTrait (@derrabus)

- feature #63277 [Messenger] Add idle timeout option to BatchHandlerTrait (@HypeMC)

- feature #61458 [HttpKernel] Validate typed request attribute values before calling controllers (@mudassaralichouhan)

- feature #63274 [HttpKernel] Add ControllerEvent::evaluate() et al. to help with evaluating expressions or closures in controller attributes (@nicolas-grekas)

- feature #63032 [HttpKernel] Dispatch events named after controller attributes (@nicolas-grekas)

- feature #63263 [FrameworkBundle][Lock] Scope semaphore and flock stores by project dir by default (@nicolas-grekas)

- feature #61457 [FrameworkBundle] Deprecate container parameters router.request_context.scheme and .host (@stollr)

- feature #63092 [HttpKernel] Expose controller metadata throughout the request lifecycle (@nicolas-grekas)

- feature #58273 Allow usage of expressions for defining validation groups in #[MapRequestPayload] (@Brajk19)

- feature #63201 [Security] Add this to #[IsGranted] subject expression vars when available (@valtzu)

- bug #63256 [Validator] Fix Xml constraint behavior when ext-simplexml/ext-dom are not installed (@nicolas-grekas)

- feature #63181 [DependencyInjection] Add target parameter to #[AsAlias] attribute (@ayyoub-afwallah)

- feature #53559 [DomCrawler] Allow to add choices to single select (@norkunas)

- feature #53998 [Security] Add retrieval of parent role names (@PierreCapel)

- feature #62875 [Messenger] Add regex support for transport name matching in messenger:consume command (@santysisi)

- feature #58732 [Finder] Allow finder to set the UNIX_PATH flag when recursing directories (Shane McKinley)

- feature #62754 [ExpressionLanguage] Add support for null-safe syntax for array access (@cancan101)

- feature #57365 [Validator] Add Xml constraint (@sfmok)

- feature #58966 [Serializer] Trigger deprecation when could not parse date with default format (@alexndlm)

- feature #57769 [Notifier] [Telegram] Add support for local API server (@ahmedghanem00)

- feature #57653 [FrameworkBundle] Allow default action in HtmlSanitizer configuration (@Neirda24)

- feature #54866 [Messenger] Add the ability to force RedisCluster (@adideas)

- feature #63130 [Console] Add TesterTrait::assertCommandFailed and TesterTrait::assertCommandIsInvalid helpers (@darovic)

- feature #63095 [FrameworkBundle] Add shortcut to run console command in tests (@Koc)

- feature #63097 [Security] Decouple SameOriginCsrfTokenManager from event listening (@jprivet-dev)

- feature #62599 [FrameworkBundle][JsonStreamer] Add default options (@mtarld)

- feature #63061 [Messenger] Add support for custom type in Serializer Transport (@lyrixx)

- feature #62885 [TypeInfo] Allow resolving object shapes (@bnf)

- feature #63183 [Console] Allow object as default for input options and arguments (@chalasr)

- feature #63157 [Security] Add support for enums in SignatureHasher::computeSignatureHash() (@nicolas-grekas)

- feature #63024 [ObjectMapper] Add targetClass on MapCollection transform (@soyuka)

- feature #63111 [DependencyInjection] Add argument exclude to ContainerConfigurator::import() (@tilaven)

- feature #63135 [Scheduler] Add option to order recurring messages by next run date in debug command (@yoye)

- feature #62434 [HttpKernel] deprecate the Extension class (@xabbuh)

- feature #63059 [Semaphore] Add SemaphoreKeyNormalizer (@clegginabox)

- feature #62909 [FrameworkBundle] Enable mocking non-shared services in tests (@HypeMC)

- feature #62751 [HttpClient] Add support of the persistent cURL handles (@Koc)

- feature #63121 [Translation][FrameworkBundle] Allow using env vars in the list of enabled locales (@nicolas-grekas)

- feature #62566 [FrameworkBundle] Add AbstractController::createFormFlowBuilder (@silasjoisten)

- feature #62917 [Console] Add ArgumentResolver (@chalasr)

- feature #63090 [HttpKernel] Return attributes as a flat list when using Controller[Arguments]Event::getAttributes(*) (@nicolas-grekas)

- feature #63039 [PhpUnitBridge] add logic for checking for dist.xml, xml.dist and xml phpunit config (@gennadigennadigennadi)

- feature #62941 [HttpKernel] Allow the Cache attribute to be applied conditionally (@HypeMC)

- feature #62511 [ObjectMapper] merge nested properties when targeting the same class (@soyuka)

- bug #63050 [ObjectMapper] improve test expectations (@soyuka)

- feature #62835 [DependencyInjection] Allow environment variables with . in them (Max Baldanza)

- bug #63028 [FrameworkBundle] Fix debug:container --tag=service.tag not showing priority of #[AsTaggedItem] (@ayyoub-afwallah)

- feature #62957 [ObjectMapper] Throw exception for invalid transform callable (@calm329, @Asenar)

- feature #62522 [FrameworkBundle][ObjectMapper] Add automatic class-map array based on map attributes (@Orkin)

- feature #63023 [Uid] Add argument $format to Ulid::isValid() (@mms-uret)

- feature #62854 [HttpClient] Add support for the max_connect_duration option (@alexandre-daubois)

- feature #62940 [HttpKernel] Allow using closures with the Cache attribute (@HypeMC)

- feature #62925 [HttpKernel] Deserialize UploadedFile for #[MapRequestPayload] (@Grafikart)

- feature #62911 [Console] Add #[AskChoice] attribute for interactive choice questions (@yceruto)

- feature #62939 [HttpKernel] Improve Cache attribute expression vars (@HypeMC)

- feature #62850 [HttpKernel] Decouple controller attributes from source code and add ResponseEvent::getControllerAttributes() (@nicolas-grekas)

- feature #62567 [Console] Add support for method-based commands (@yceruto)

- feature #62538 [HttpKernel] Add support for SOURCE_DATE_EPOCH environment variable (Charles-Édouard Coste)

- feature #62824 [DependencyInjection][SecurityBundle] Leverage #[AsTaggedItem] for voters (@ayyoub-afwallah)

- feature #62339 [DependencyInjection] Deprecate default index/priority methods when defining tagged locators/iterators (@nicolas-grekas)

- feature #62792 [DoctrineBridge] Deprecate RegisterMappingsPass::$aliasMap (@GromNaN)

- feature #62800 [HttpKernel] Add support for bundles as compiler passes (@yceruto)

- feature #62578 [Form] Add reset button in NavigatorFlowType (@Nayte91)

- feature #62572 [Messenger] Use clock in DelayStamp and RedeliveryStamp instead of native time classes and methods (@bluemmb)

- feature #62483 [DependencyInjection] Deprecate invalid options when using from_callable (@yoeunes)

- feature #60372 [Mailer][SendGrid] add support for scheduling delivery via send_at API parameter (Mickael GOETZ, @MrMitch)

- feature #62322 [Security] add missing Clear-Site-Data directives (@xabbuh)

- feature #62390 [Mailer] Add support of ipPoolId for infobip mailer transport (@jbdelhommeau)
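
Entry #45553 in the list above concerns how the Translation component computes locale fallbacks. As a rough illustration of the idea only, the plain-PHP sketch below builds a fallback chain by trimming subtags from a locale and then appending the configured fallbacks. The function name computeFallbackChain() is hypothetical and is not the class introduced by that pull request; the actual component may apply further rules that this sketch ignores.

```php
<?php

declare(strict_types=1);

/**
 * Hypothetical helper (not a Symfony API): returns the locales to try
 * after $locale, most specific first.
 *
 * @param list<string> $configuredFallbacks
 *
 * @return list<string>
 */
function computeFallbackChain(string $locale, array $configuredFallbacks): array
{
    $chain = [];

    // Trim one subtag at a time: "zh_Hant_TW" yields "zh_Hant", then "zh".
    $current = $locale;
    while (false !== ($pos = strrpos($current, '_'))) {
        $current = substr($current, 0, $pos);
        $chain[] = $current;
    }

    // Append the configured fallbacks, skipping the requested locale itself.
    foreach ($configuredFallbacks as $fallback) {
        if ($fallback !== $locale) {
            $chain[] = $fallback;
        }
    }

    // Drop duplicates while keeping the first occurrence of each locale.
    return array_values(array_unique($chain));
}

echo implode(', ', computeFallbackChain('fr_CA', ['en', 'fr'])), PHP_EOL; // prints "fr, en"
```

Keeping this computation in one small, side-effect-free function is what makes it easy to test in isolation, which is presumably part of the motivation for extracting it.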

Symfony 6.4.38 has just been released.

Read the Symfony upgrade guide to learn more about upgrading Symfony and use the SymfonyInsight upgrade reports to detect the code you will need to change in your project.

Tip

Want to be notified whenever a new Symfony release is published? Or when a version is not maintained anymore? Or only when a security issue is fixed? Consider subscribing to the Symfony Roadmap Notifications.

Changelog Since Symfony 6.4.37

- data #64146 Release v6.4.38

- bug #63761 [Scheduler] Fix checkpoint state expiring when cache has default TTL (@Amoifr)

- bug #64138 [Translation] Fix TranslationPushCommand::getDomainsFromTranslatorBag (@MatTheCat)

- bug #63757 [Messenger][AmazonSqs] Do not override queue-level DelaySeconds when no DelayStamp is set (@psantus)

- bug #64122 [Cache] Ensure compatibility with Relay extension 0.22.0 (@nicolas-grekas)

- bug #64119 [Yaml] Fix flow collections dropping &anchor and !!str &anchor items (@ousamabenyounes)

- bug #64086 [Messenger] Move --time-limit handling to Worker for proper capping with --sleep (@Toflar)

- bug #64100 [Translation] URL-encode tmp path in XliffUtils::shouldEnableEntityLoader (@ousamabenyounes)

- bug #64095 [RateLimiter] Carry over reserved tokens past fixed window resets (@ousamabenyounes)

- bug #64099 [Ldap] Cast default_socket_timeout to int (@ousamabenyounes)
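
The last entry above (#64099) is about casting default_socket_timeout to an integer. The underlying PHP behavior is that ini_get() returns settings as strings, so under strict types the raw value cannot be passed where an int is expected. The sketch below shows only that general pattern; describeTimeout() is a hypothetical stand-in and is not code from the Ldap component.

```php
<?php

declare(strict_types=1);

// Hypothetical stand-in for any API that requires an integer timeout in seconds.
function describeTimeout(int $seconds): string
{
    return sprintf('timeout=%ds', $seconds);
}

// ini_get() returns the setting as a string (or false if the option is unknown).
$raw = ini_get('default_socket_timeout');

// With strict_types enabled, passing $raw directly would throw a TypeError,
// so the value is cast explicitly first.
$timeout = (int) $raw;

echo describeTimeout($timeout), PHP_EOL;
```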

Symfony 8.0.10 has just been released.

Changelog Since Symfony 8.0.9

- data #64145 Release v8.0.10

- bug #63761 [Scheduler] Fix checkpoint state expiring when cache has default TTL (@Amoifr)

- bug #64141 [DependencyInjection] Fix lazy-autowiring an already-lazy service (@nicolas-grekas)

- bug #64138 [Translation] Fix TranslationPushCommand::getDomainsFromTranslatorBag (@MatTheCat)

- bug #63744 [Validator] Fix Compound constraint with nested Composite and validation groups (@Amoifr)

- bug #63757 [Messenger][AmazonSqs] Do not override queue-level DelaySeconds when no DelayStamp is set (@psantus)

- bug #64122 [Cache] Ensure compatibility with Relay extension 0.22.0 (@nicolas-grekas)

- bug #64119 [Yaml] Fix flow collections dropping &anchor and !!str &anchor items (@ousamabenyounes)

- bug #64016 [Mailer][AzureMailer] Fix inline attachments (@kohlerdominik)

- bug #64086 [Messenger] Move --time-limit handling to Worker for proper capping with --sleep (@Toflar)

- bug #63858 [Cache] Remove conflict with dbal<4.3 (@flack)

- bug #64103 [AssetMapper] Stop baking CSP nonce into the importmap polyfill body (@ousamabenyounes)

- bug #64106 [Config] Normalize backed-enum in array shapes (@MatTheCat)

- bug #64100 [Translation] URL-encode tmp path in XliffUtils::shouldEnableEntityLoader (@ousamabenyounes)

- bug #64095 [RateLimiter] Carry over reserved tokens past fixed window resets (@ousamabenyounes)

- bug #64111 [Serializer] Fix low deps (@nicolas-grekas)

- bug #64099 [Ldap] Cast default_socket_timeout to int (@ousamabenyounes)

Symfony 7.4.10 has just been released.

Read the Symfony upgrade guide to learn more about upgrading Symfony and use the SymfonyInsight upgrade reports to detect the code you will need to change in your project.

Tip